Three Rules for Effective A/B Testing of Marketing Tactics

Would a short video on your webinar landing page increase conversions? Does an email with a question in the subject line generate a bigger response? Does adding a limited-time element to a CTA improve click-through?

There are surveys and best-practice articles that can guide those decisions, but the only way to know for sure how your company’s customers and prospects will respond is to test those elements yourself.

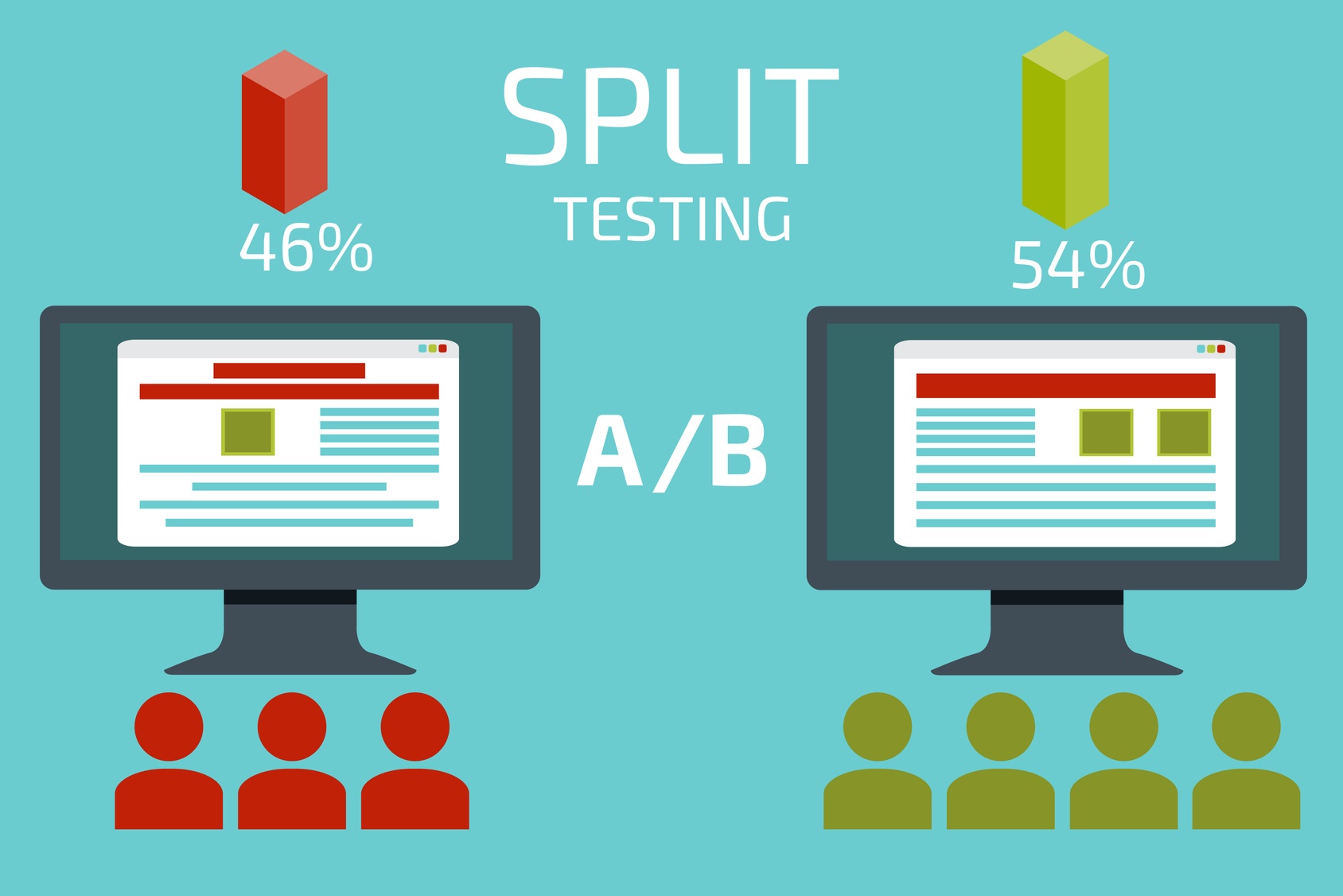

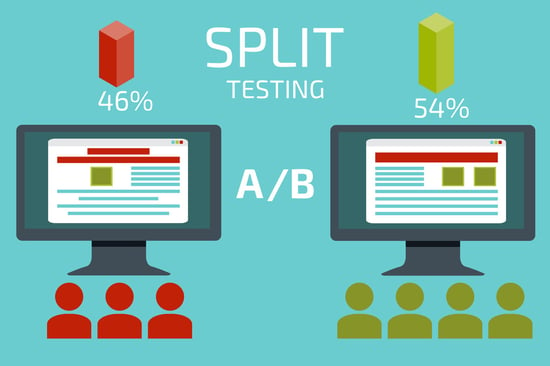

A/B testing, also called split testing, is a way to compare two versions of a marketing element to identify which one yields a better response rate. In this context, the existing version of the element being tested is the “control,” and the version you believe will give a better result is the “treatment.”

The first step is to create two (or more) versions of the element you want to test, changing only one aspect of that element. For example, you would craft an outgoing email just as you always do, then duplicate it and change the subject line. Then send the two emails to similar, randomly selected lists and see which one generates more clicks and conversions.
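If your emails are assembled in code or exported from your email platform, the "change only one aspect" rule can be checked mechanically. The Python sketch below assumes a hypothetical `Email` record with four fields; `make_treatment` duplicates the control and changes exactly one field, and `changed_fields` lists which fields differ between two versions. These names are illustrative only, not part of any email tool's API.

```python
from dataclasses import dataclass, fields, replace
from typing import List


@dataclass(frozen=True)
class Email:
    # Hypothetical fields for illustration; your email platform will have its own.
    subject: str
    body: str
    cta_text: str
    send_time: str


def make_treatment(control: Email, **change: str) -> Email:
    """Duplicate the control version and change exactly one field."""
    if len(change) != 1:
        raise ValueError("change exactly one field per test")
    return replace(control, **change)


def changed_fields(a: Email, b: Email) -> List[str]:
    """Return the names of the fields that differ between two versions."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


control = Email(
    subject="Join our webinar on inbound marketing",
    body="(email body)",
    cta_text="Register now",
    send_time="2024-04-09 10:00",
)
# Treatment: same email, but with a question in the subject line.
treatment = make_treatment(control, subject="Want better results from inbound marketing?")
print(changed_fields(control, treatment))  # ['subject']
```

Refusing to build a treatment that changes more than one field keeps any difference in response attributable to that single change.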

However, for your results to be accurate and usable, you need to follow three basic rules for A/B testing.

Rules for Effective Split Tests

1. Split your sample group randomly

To achieve accurate and conclusive results about which version of your tested element performs best, you need to test with two or more audiences that are equivalent.

This means splitting your sample group randomly so that each segment is comparable to the others. For email tests, this would mean your lists are similar in:

- Source (Did they subscribe to your blog? Sign up for email updates? Or is the list from a partnering business?)
- Type (Are they customers? Leads? Do they share similar job titles or industries?)
- Length of time the contacts have been on the list
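One way to get groups that are both random and similar in source, type, and length of time on the list is stratified random assignment: group contacts by those attributes, shuffle each group, and deal its members out alternately to the two versions. The Python sketch below assumes contacts are dicts with hypothetical `source`, `type`, and `months_on_list` keys and a hypothetical six-month tenure cutoff; substitute whatever fields your contact database actually stores.

```python
import random
from collections import defaultdict


def stratified_split(contacts, key, seed=None):
    """Randomly split contacts into two groups that are balanced within each
    stratum (each combination of attributes returned by `key`)."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for contact in contacts:
        strata[key(contact)].append(contact)

    group_a, group_b = [], []
    for members in strata.values():
        rng.shuffle(members)
        # Random offset decides which group gets the extra contact in odd-sized strata.
        offset = rng.randrange(2)
        for i, contact in enumerate(members):
            (group_a if (i + offset) % 2 == 0 else group_b).append(contact)
    return group_a, group_b


def tenure_band(months):
    # Hypothetical cutoff; pick bands that make sense for your own lists.
    return "new" if months < 6 else "established"


def stratum(contact):
    return (contact["source"], contact["type"], tenure_band(contact["months_on_list"]))


# Hypothetical contact records for illustration.
contacts = [
    {
        "email": f"user{i}@example.com",
        "source": "blog" if i % 3 else "partner",
        "type": "customer" if i % 2 else "lead",
        "months_on_list": i % 12,
    }
    for i in range(200)
]

group_a, group_b = stratified_split(contacts, key=stratum, seed=42)
print(len(group_a), len(group_b))
```

Within every stratum the two groups differ by at most one contact, so neither version ends up with, say, most of the partner-sourced leads.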

To test landing pages effectively, send an equal number of visitors from each source (social media, email, search) to each landing page.
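For landing pages, one way to keep each traffic source evenly divided is to alternate assignments within each source. The Python sketch below assumes your site can report a visitor ID and a traffic source for each visitor (both hypothetical inputs here) and remembers each visitor's page so returning visitors see the same version. It illustrates the idea; it is not a feature of any particular testing tool.

```python
import random
from collections import Counter


class PerSourceSplitter:
    """Assign new visitors to landing page "A" or "B" so that every traffic
    source sends (nearly) equal numbers of visitors to each page."""

    def __init__(self, seed=None):
        self._rng = random.Random(seed)
        self._next_page = {}     # source -> 0 (page A) or 1 (page B) for the next new visitor
        self._assigned = {}      # visitor_id -> page, so returning visitors see the same page
        self.counts = Counter()  # (source, page) -> number of visitors assigned

    def assign(self, visitor_id, source):
        if visitor_id in self._assigned:
            return self._assigned[visitor_id]
        if source not in self._next_page:
            # Random starting page per source so neither page is favored by default.
            self._next_page[source] = self._rng.randrange(2)
        page = "AB"[self._next_page[source]]
        self._next_page[source] ^= 1  # alternate for the next new visitor from this source
        self._assigned[visitor_id] = page
        self.counts[(source, page)] += 1
        return page


splitter = PerSourceSplitter(seed=7)
for i, source in enumerate(["social", "email", "search"] * 100):
    splitter.assign(f"visitor-{i}", source)
print(splitter.counts)
```

With 100 visitors from each of the three sources, as in this usage example, each source sends exactly 50 visitors to page A and 50 to page B.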

If you are comparing the lists themselves, keep all other aspects of design and timing identical so that your results reflect only the difference between the lists, without other elements factoring into the findings.

2. Test both versions at the same time

Timing matters. To truly compare the results of two versions of an email, landing page or call-to-action, deploy both versions simultaneously: the same time of day, same day of the week, same month of the year.

If you test Version A in April and Version B in May, you won’t know whether differences in response were due to the difference in the emails themselves, or a difference in the market and time of year.

3. Determine the necessary difference before testing

Just as we say you need to do the math and set goals before starting an inbound marketing campaign, you need to know what level of results your A/B tests must produce to warrant changes to your website or email strategies.

Decide in advance:

- How many visits/responses are needed overall
- How large a difference in performance is needed to signify a real improvement

For example, when testing an email template with two lists of 500 contacts each, you may decide in advance that in order to consider the test valid, you need:

- At least 100 responses in total (10 percent of the combined 1,000 contacts)
- For one version to perform at least 50 percent better than the other
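Thresholds like these can be written down as a simple decision rule before the test starts. The Python sketch below first checks the total response count, then compares response rates (responses divided by list size) and reports a winner only if the leading version's rate is at least 50 percent higher than the other's. Reading "50 percent better" as relative lift in response rate is an assumption, and the function and parameter names are hypothetical.

```python
from fractions import Fraction


def evaluate_test(responses_a, size_a, responses_b, size_b,
                  min_total_responses=100, min_lift=0.5):
    """Apply thresholds that were decided before the test started.

    Defaults mirror the example: at least 100 responses in total, and the
    leading version's response rate at least 50 percent higher than the other's.
    """
    if responses_a + responses_b < min_total_responses:
        return "inconclusive: too few responses"
    # Exact fractions, so a lift of exactly 50 percent counts as meeting the bar.
    rate_a = Fraction(responses_a, size_a)
    rate_b = Fraction(responses_b, size_b)
    if rate_a == rate_b:
        return "no winner: both versions performed the same"
    if rate_a > rate_b:
        winner, best, other = "A", rate_a, rate_b
    else:
        winner, best, other = "B", rate_b, rate_a
    if other == 0:
        return f"{winner} wins (the other version got no responses)"
    lift = best / other - 1  # relative improvement of the leader, e.g. 1/2 = 50%
    if lift >= Fraction(str(min_lift)):
        return f"{winner} wins ({float(lift):.0%} better)"
    return f"no winner: {winner} led by only {float(lift):.0%}"


# Two lists of 500 contacts each, as in the example.
print(evaluate_test(40, 500, 30, 500))  # inconclusive: too few responses
print(evaluate_test(66, 500, 40, 500))  # A wins (65% better)
print(evaluate_test(55, 500, 50, 500))  # no winner: A led by only 10%
```

With lists of 500, 66 versus 40 responses clears both thresholds (13.2 versus 8.0 percent, a 65 percent lift), while 55 versus 50 reaches 100 responses but falls short on lift.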

By identifying these targets in advance, you can feel more confident in your results. For tests of elements such as call-to-action buttons or landing page designs, you could also use a threshold number of clicks or visits to determine when to end a test.
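For call-to-action buttons or landing pages, the end point can be a visit or click threshold per version, fixed before launch. The Python sketch below uses hypothetical daily counts and a hypothetical threshold of 300 visits per version, and returns the first day on which every version has reached the threshold, or `None` if none does.

```python
from typing import Iterable, Mapping, Optional, Sequence


def day_test_can_end(
    daily_visits: Iterable[Mapping[str, int]],
    versions: Sequence[str],
    min_visits_per_version: int,
) -> Optional[int]:
    """Return the 1-based day on which every version has reached the
    pre-set visit threshold, or None if the threshold is never reached."""
    totals = {version: 0 for version in versions}
    for day, visits in enumerate(daily_visits, start=1):
        # Add today's visits; a version missing from today's data counts as 0.
        for version in versions:
            totals[version] += visits.get(version, 0)
        if all(totals[v] >= min_visits_per_version for v in versions):
            return day
    return None


# Hypothetical daily visit counts for two landing-page versions.
daily = [
    {"A": 120, "B": 115},
    {"A": 130, "B": 140},
    {"A": 110, "B": 100},
]
print(day_test_can_end(daily, ["A", "B"], 300))  # 3
```

Waiting until every version reaches the threshold, rather than stopping as soon as one version pulls ahead, keeps the decision tied to the target you set in advance.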

Get details on setting up A/B tests for your marketing elements, along with case studies of successful split testing, in our Introduction to Using A/B Testing for Marketing Optimization.